AI agents are getting smarter, faster, and much more useful. They can handle customer conversations, manage workflows, review information, and even take actions that used to belong to human teams. That is exactly why trust has become such a big issue.

The moment an AI system starts doing more than suggesting ideas, the stakes change. A chatbot giving a rough answer is one thing. An AI agent that can move money, negotiate terms, trigger workflows, or act on behalf of a business is something else entirely. At that point, the question is no longer just whether the technology works, but whether businesses can trust it enough to use it at scale.

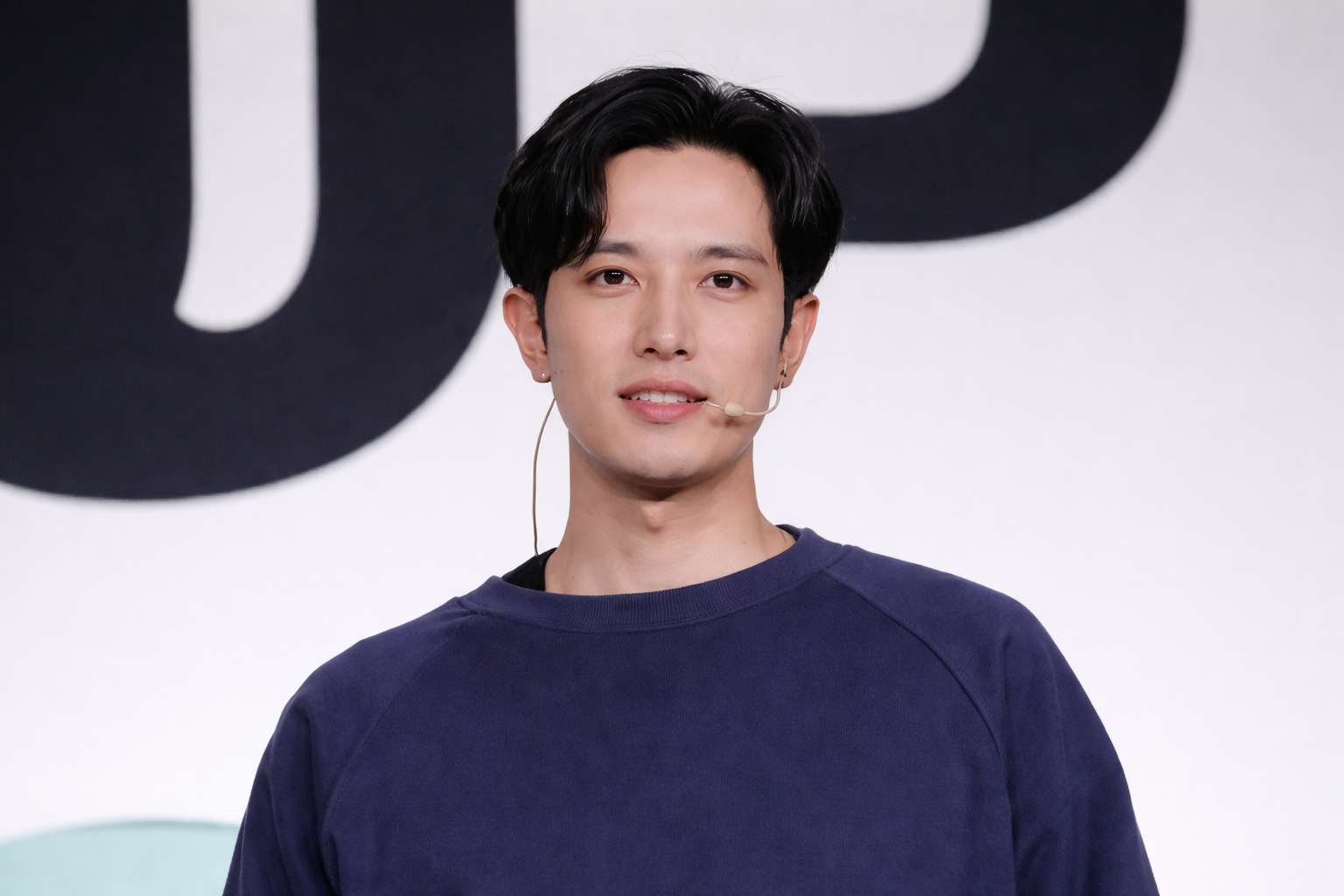

That is the space Fabian Amherd stepped into with Mount. Instead of building yet another AI product focused only on speed or automation, he helped build a company around a much harder problem: how to make AI agents safe enough, measurable enough, and insurable enough for real-world deployment.

Why AI agents created a trust problem before they created a mainstream market

A lot of the AI conversation has focused on capability. People want to know how much an agent can do, how efficiently it can work, and how much labor it can replace. But for businesses, capability is only half the story.

The bigger concern is what happens when things go wrong.

An AI agent can misread instructions, take actions it should never take, expose sensitive information, or behave unpredictably once it is connected to tools, systems, and live data. In a controlled demo, those risks can look small. In production, they become legal, operational, and financial problems.

That is what makes trust such an important bottleneck. Companies may be excited about autonomous AI, but they still need answers to uncomfortable questions. What is the actual risk of deployment? How should that risk be tested? Who takes responsibility when an agent fails? What kind of standards should apply before an AI system goes live?

Fabian Amherd and the team at Mount built their company around that gap. They recognized that businesses do not just need more powerful AI agents. They need a framework that helps them understand risk before those agents are trusted with meaningful responsibilities.

Fabian Amherd’s path before Mount

Founders usually build best when they understand the problem from both a technical and a practical angle. Fabian Amherd’s background makes that part of the Mount story especially relevant.

Before Mount, he studied computer science at ETH Zürich with a focus on machine learning. That matters because Mount is not built around surface-level AI hype. It sits in a category where technical depth is a prerequisite: you cannot build a company around evaluating and underwriting AI risk unless you understand how these systems behave in the real world.

He also worked at MESH, an ETH robotics spin-off, where he built and deployed custom CNNs for real-time object detection in industrial robotics. That kind of work tends to shape a founder’s view of deployment very differently. When you work on systems that have to perform in real environments, you learn quickly that real-world behavior is rarely as neat as a lab result.

Fabian also founded and ran a web development company for several years, starting at age 16. That part of the story matters too. It suggests he was not only technical, but also used to building from scratch, solving practical problems, and working close to execution.

Put those pieces together and Mount starts to make more sense. Fabian Amherd did not arrive at the AI agent space as someone chasing a trend. He came into it with a background in machine learning, deployed systems, and hands-on building.

The problem Mount saw earlier than most people

Mount is built around a simple idea that is easy to understand and hard to solve. As AI systems move from copilots to autonomous operators, the old way of thinking about software risk starts to break down.

Traditional insurers are not built for this category. They understand familiar forms of business risk. They know how to think about cyber incidents, operational failures, and some kinds of liability. But AI agents introduce a new blend of uncertainty. They act dynamically, connect to outside tools, respond to live prompts, and can create consequences that are hard to predict using older underwriting models.

That leaves a major gap in the market.

Businesses want the upside of AI automation, but many do not want to absorb open-ended liability. Insurers, on the other hand, may hesitate because they do not have the data, standards, or frameworks needed to assess deployed AI systems properly. So the market stalls in the middle.

This is the trust problem Mount is trying to solve.

Instead of treating AI risk as a side issue, Mount treats it as the product category itself. The company focuses on assessing risk, improving security, certifying readiness, and then supporting deployment with insurance. That combination is what makes the company stand out.

How Mount is building a trust layer for AI agents

Mount’s model is interesting because it does not stop at identifying risk. It tries to cover the full chain of trust.

The first layer is assessment. Mount positions itself around testing AI agents, scanning for vulnerabilities, and scoring operational risk. That gives companies a way to move from vague fear to something more concrete. Instead of simply saying an AI agent feels risky, they can start looking at exposure in a structured way.

The second layer is security improvement. That matters because testing alone is not enough. If a company finds weak points but has no path to strengthening controls, the insight has limited value. Mount’s framing suggests the company wants to help customers reduce risk, not just document it.

The third layer is certification. This is where the company introduces ADR, or Agent Deployment Readiness, which it describes as a SOC 2-like framework for AI agents. That comparison is smart because it gives people a familiar reference point. In software and security, standards help buyers feel more comfortable. They make trust easier to communicate inside procurement, legal, compliance, and leadership teams.

The fourth layer is insurance. This may be the most important part of the story. A lot of startups talk about security, observability, or governance. Mount goes further by building around the financial side of trust. If AI agents are going to operate in ways that can create real liability, then businesses will eventually need more than technical safeguards. They will also want coverage.

That full-stack approach is what gives Mount a stronger narrative than a typical security startup. It is not only trying to make AI agents safer. It is trying to make them insurable and easier to adopt in serious business environments.

Why ADR could become an important concept

One of the more interesting parts of the Mount story is ADR, the Agent Deployment Readiness framework at the center of its certification layer.

That phrase works because it captures something the market still lacks. Businesses do not just need proof that an AI model is impressive. They need proof that an AI agent is ready to operate in a live environment with acceptable risk.

That is a very different standard.

A capable system is not always a trustworthy one. A fast system is not always a controllable one. And an autonomous system is definitely not something most enterprises will deploy broadly without a layer of evidence around reliability, safeguards, and oversight.

By framing ADR as something similar to SOC 2 for AI agents, Mount is trying to create language that businesses can use internally. That matters more than it may seem. New categories often grow when they find language that buyers can understand quickly.

If ADR gains traction, it could help companies move AI agents from experimental pilots to production environments with more confidence. It could also help create a benchmark for what responsible deployment should look like as the agent economy grows.

Why Fabian Amherd and Mount fit the moment

Timing matters in startups, and Mount appears to be entering the market at a time when AI adoption is becoming more serious. The conversation is shifting from novelty to accountability.

Early on, businesses were mostly asking what AI could do. Now they are also asking how to deploy it safely, how to manage exposure, and how to justify adoption to stakeholders who care about security, compliance, and financial risk.

That shift gives Mount a strong opening.

Fabian Amherd’s background gives the company technical credibility, while the product story gives it a clear market angle. Mount is not trying to win by promising the flashiest AI demo. It is trying to become part of the trust infrastructure behind AI agent adoption.

That is a powerful place to build.

The company’s Y Combinator backing adds another layer of momentum. It suggests that investors see the category as important and consider Mount’s approach to it compelling. For an early-stage startup, that kind of validation can help attract attention, talent, and early conversations with companies that already see AI agents as part of their future.

Why this story matters beyond one startup

Fabian Amherd and Mount represent a broader shift happening across the AI market. The next wave of opportunity may not belong only to the companies building smarter agents. It may also belong to the companies building the systems that make those agents trusted enough for real deployment.

That is where Mount stands out.

It sits at the intersection of AI risk, AI security, certification, and insurance. It speaks to a problem that will likely grow alongside the agent economy rather than disappear. The more useful AI agents become, the more important trust becomes. And the more important trust becomes, the more valuable companies like Mount could be.

Fabian Amherd’s role is central to that story. His background in machine learning and deployed systems gave him the perspective to see that trust was not a side issue. It was the missing layer.

In many ways, that is what makes Mount interesting. It is not just another AI startup riding the market. It is a company built around one of the most important questions the market still has not fully answered.