AI coding agents are getting better at writing code, fixing bugs, and speeding up day-to-day development work. But the moment that work touches a live database, the mood changes. What feels fast and exciting in an editor can turn risky very quickly when schema changes, migrations, backfills, or cleanup jobs are involved.

That gap between AI speed and database safety is where Vikram Chennai built Ardent.

Ardent is focused on a problem a lot of modern engineering teams already feel. AI can help write database code, but teams still need a safe way to test whether that code actually works against real data. Staging environments are often outdated, seed scripts rarely capture production complexity, and nobody wants an agent experimenting against a real production database. Ardent’s answer is simple to describe but powerful in practice: give every coding agent an isolated copy of the database so it can test its work without creating production risk.

Why databases became a weak spot in the AI coding era

The rise of AI developer tools changed expectations almost overnight. Engineers can now generate code faster, explore solutions faster, and move through routine tasks with much less manual effort. Tools such as Cursor and Claude Code pushed that shift even further by making agent-style workflows feel more normal inside real engineering teams.

But databases have always played by different rules.

You can usually roll back an app-level bug with less pain than a broken migration, a bad backfill, or a schema change that hits production too early. Database mistakes tend to be slower to detect, harder to unwind, and far more expensive once real data is involved. That is exactly why database work still makes teams nervous, even when they are comfortable using AI in other parts of the stack.

This is also why old workflows started to feel weaker as AI tools improved. Shared staging databases create conflicts. Fake seed data misses edge cases. Static test environments drift away from production reality. So even if an AI coding agent writes something that looks correct, teams still have to ask the same question: will it actually work on the real database without breaking something important?

That question sits at the center of Ardent’s product story.

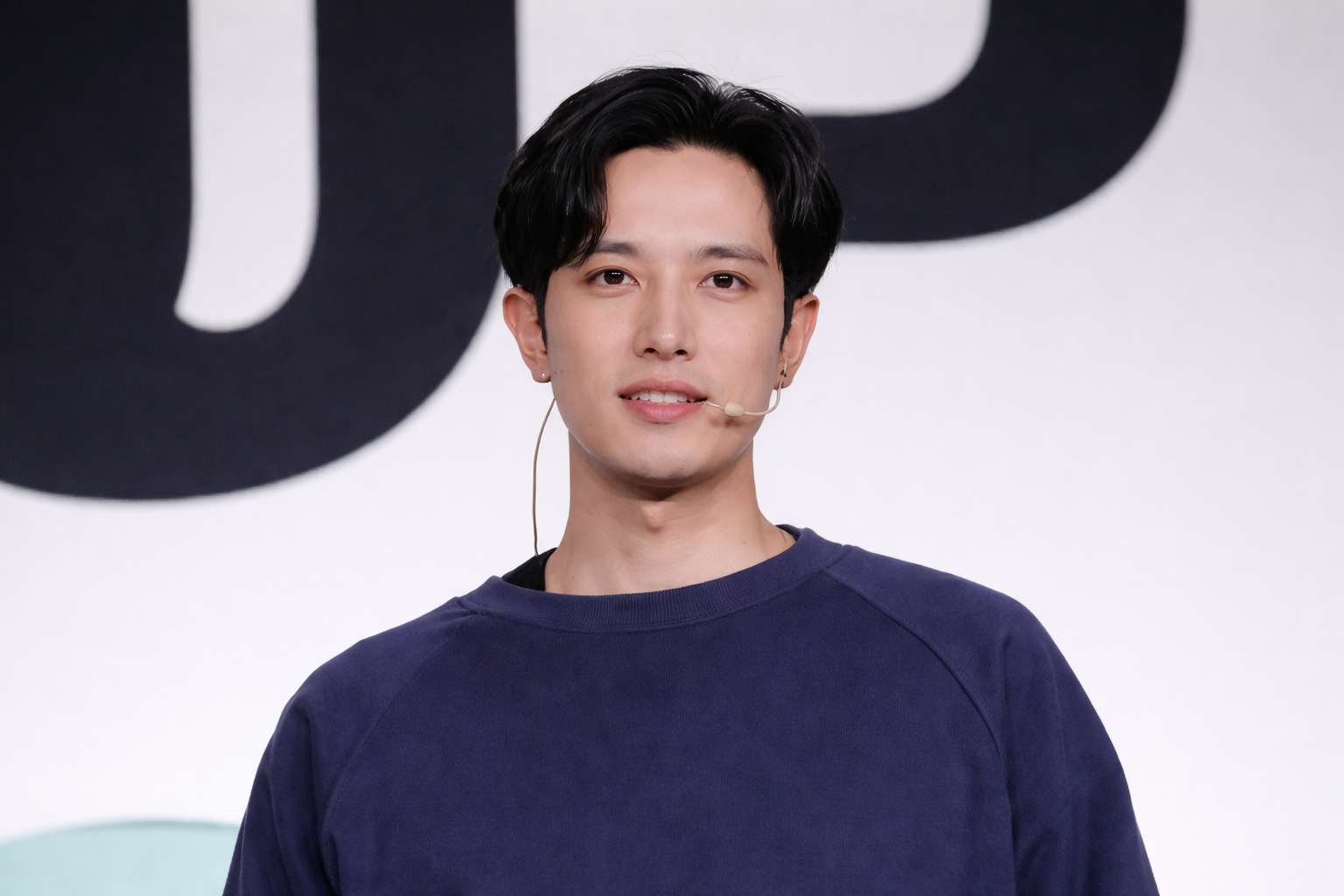

Who Vikram Chennai is and why Ardent makes sense for his background

Vikram Chennai is the founder of Ardent, a Y Combinator Spring 2026 company in the data engineering and infrastructure space. Public company information also describes him as a former machine learning engineer with a Cornell computer science background, which makes Ardent feel like a natural fit rather than a random startup idea.

That matters because Ardent is not trying to win attention with a vague AI promise. It is built around a very specific technical bottleneck. The company is focused on database sandboxes for agents, especially around Postgres workflows where speed, realism, and isolation matter a lot.

A founder’s background does not automatically guarantee product success, but it often shapes what kind of problems they notice early. In Vikram Chennai’s case, Ardent reflects a strong read on where AI-assisted development still feels fragile. The bottleneck is not always code generation itself. A lot of the anxiety lives in verification, especially when databases are involved.

What Ardent actually does

Ardent lets teams create instant, isolated copies of a production Postgres database so coding agents can test their database work on real data without touching production.

That is the core idea, and it is a strong one.

Instead of asking an engineering team to trust seed files, limited staging environments, or rough approximations of the real system, Ardent gives them a 1:1-style copy of the production database where migrations, schema changes, database cleanup, validation work, and backfills can be tested more realistically. The company positions this as a way to let coding agents verify their work before anything reaches production.

Ardent also leans hard into speed. Its public messaging says copies of any Postgres database can be created in under 6 seconds, regardless of size. That matters because safety alone is not enough. If a safe workflow is slow, teams will work around it. If it is fast enough to fit naturally into engineering habits, it has a much better chance of becoming part of the actual workflow.

The real problem Ardent is solving

At first glance, Ardent might look like a database cloning tool. But the bigger problem it is solving is confidence.

Engineering teams want faster delivery, especially now that AI coding agents can accelerate routine work. At the same time, nobody wants more uncertainty around the database layer. That is where a lot of expensive problems happen.

Think about the usual choices teams make today.

One option is to test against a shared staging database, which often creates noise, conflicts, and delays. Another is to rely on seed scripts and synthetic fixtures, which may work for simple checks but often fail to reflect the messiness of real production data. A third option is to do less testing than the team would prefer and hope the change is safe.

None of those options feels great.

Ardent steps into that gap by making it easier to test database code against production-like data in an isolated environment. That changes the conversation from “should we trust this AI-generated database change?” to “can we verify it safely before rollout?” That is a much healthier question for modern engineering teams.

How Ardent makes AI coding agents safer

Ardent’s value becomes clearer when you look at how it frames the workflow.

The company says it replicates a production database into a read replica and then creates lightweight copy-on-write forks on demand. Those forks act like branches for the database, which is why Ardent describes itself as something close to Git for data infrastructure. In practice, that means teams can spin up isolated database branches, test work inside them, compare changes, and throw them away when they are done.
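To make the copy-on-write idea concrete, here is a minimal Python sketch. It is a toy model, not Ardent's implementation: the `Fork` class, the `replica` snapshot, and the row layout are all hypothetical, and real systems typically apply the same idea at the storage page or block level rather than per row. The point it illustrates is that a fork starts empty and records only its own changes, so creating one costs the same no matter how large the shared base is.

```python
from types import MappingProxyType

_DELETED = object()  # tombstone marking a row removed inside a fork


class Fork:
    """Toy copy-on-write fork over a shared, read-only base snapshot."""

    def __init__(self, base):
        self._base = base   # shared by every fork, never mutated here
        self._delta = {}    # only the rows this fork has written or deleted

    def get(self, key):
        # Reads check the fork's own changes first, then fall back to the base.
        if key in self._delta:
            value = self._delta[key]
            return None if value is _DELETED else value
        return self._base.get(key)

    def put(self, key, row):
        # Writes land in the fork's private delta, not in the base.
        self._delta[key] = row

    def delete(self, key):
        self._delta[key] = _DELETED

    def diff(self):
        """Return what this fork changed relative to the base (None = deleted)."""
        return {k: (None if v is _DELETED else v) for k, v in self._delta.items()}


# Hypothetical stand-in for the read replica: an immutable snapshot.
replica = MappingProxyType({
    1: {"email": "a@example.com"},
    2: {"email": None},
})

fork_a = Fork(replica)  # creating a fork copies nothing
fork_b = Fork(replica)

fork_a.put(2, {"email": "unknown@example.com"})
fork_a.delete(1)

print(fork_a.get(2))  # the fork sees its own write
print(fork_b.get(2))  # the sibling fork still sees the base row
print(fork_a.diff())  # the changes that could be reviewed or thrown away
```

Discarding a fork is just dropping its delta, which is what makes the branch-and-throw-away workflow cheap in this model.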

That model is useful for human engineers, but it is especially useful for AI coding agents.

Agents are powerful when they can try, test, adjust, and verify. They are much less trustworthy when they can only generate code in the abstract. Ardent gives them a safer place to do that verification work. If an agent is cleaning data, testing a migration, validating a schema update, or checking a backfill process, it can do that work on a real database copy rather than a fake environment or a risky production target.

That dramatically lowers blast radius.

Ardent also emphasizes that each clone is isolated at both compute and storage level, which strengthens the safety story further. For teams nervous about production exposure, that kind of isolation is not just a nice technical detail. It is the reason the product is usable in the first place.
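The verify-on-a-copy workflow described in this section can be sketched in a few lines of Python. This is an illustration under loud assumptions: it uses SQLite from the standard library as a stand-in for Postgres, and the helper names (`clone`, `migration_is_safe`), the `users` table, and both migrations are invented for the example. It does not use or represent Ardent's API. What it shows is the shape of the loop: copy the database, run the change on the copy, check the result, and leave the original untouched.

```python
import sqlite3


def clone(source):
    """Copy a database into a fresh, independent in-memory database."""
    copy = sqlite3.connect(":memory:")
    source.backup(copy)  # sqlite3's online backup API
    return copy


def migration_is_safe(source, migration_sql, check_sql):
    """Run a migration on a throwaway clone and report whether it holds up.

    check_sql must return a single number: how many rows violate the
    expectation (0 means the migration passed).
    """
    sandbox = clone(source)
    try:
        sandbox.executescript(migration_sql)
        (violations,) = sandbox.execute(check_sql).fetchone()
        return violations == 0
    except sqlite3.Error:
        return False  # the migration itself failed on real-looking data
    finally:
        sandbox.close()


# Stand-in for production: one row has the kind of gap seed data tends to miss.
prod = sqlite3.connect(":memory:")
prod.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT);
    INSERT INTO users (email) VALUES ('a@example.com'), (NULL);
""")
prod.commit()

NAIVE_MIGRATION = """
    CREATE TABLE users_v2 (id INTEGER PRIMARY KEY, email TEXT NOT NULL);
    INSERT INTO users_v2 SELECT id, email FROM users;
"""
FIXED_MIGRATION = """
    CREATE TABLE users_v2 (id INTEGER PRIMARY KEY, email TEXT NOT NULL);
    INSERT INTO users_v2
        SELECT id, COALESCE(email, 'unknown@example.com') FROM users;
"""
# Expectation: every row in users made it into users_v2.
ROW_COUNT_CHECK = """
    SELECT (SELECT COUNT(*) FROM users) - (SELECT COUNT(*) FROM users_v2)
"""

print(migration_is_safe(prod, NAIVE_MIGRATION, ROW_COUNT_CHECK))  # False
print(migration_is_safe(prod, FIXED_MIGRATION, ROW_COUNT_CHECK))  # True
```

The naive migration fails only because the data contains a NULL email, which is the sort of edge case a clean seed script would likely not include. In both runs the original `prod` connection never receives the `users_v2` table.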

Why this matters for modern engineering teams

The most interesting thing about Ardent is that it speaks to a larger shift in developer infrastructure.

For years, a lot of engineering tooling was built around making developers faster. Now the next wave is about making AI-accelerated teams safer and more reliable. Speed still matters, but speed without verification creates a different kind of bottleneck.

That is why Ardent’s positioning works.

It is not asking teams to slow down and become more cautious in the old sense. It is saying teams can move quickly and still protect their databases by giving every task, and potentially every coding agent, its own isolated environment. That fits the way modern teams already want to work.

It also helps that Ardent is built around familiar concepts. Branching, isolation, switching contexts, testing changes, and cleaning up temporary environments all feel natural to engineers who already think in Git-style workflows. The product does not ask teams to adopt a strange new mental model. It adapts an existing one to the database layer.

That makes adoption easier to imagine.

How Ardent’s positioning helped it stand out

There is no shortage of startups trying to attach themselves to the AI development boom. What usually separates the stronger companies from the weaker ones is clarity.

Ardent has a clear story.

It is not trying to be every kind of infrastructure tool for every possible team. It is focused on database sandboxes for agents. It is tied closely to Postgres. It speaks directly to a painful workflow. It explains the benefit in practical terms: use coding agents on real data without putting production at risk.

That kind of sharp positioning matters a lot.

It helps potential users understand the value quickly. It helps investors see the wedge. It helps readers understand why the company exists. And it gives Vikram Chennai a more credible founder story because the company is built around a real operational problem, not a buzzword-heavy abstraction.

Ardent’s Y Combinator backing also adds another layer of validation. YC tends to favor startups that can explain a real problem clearly and show why their approach matters right now. Ardent fits that pattern well because it sits at the intersection of AI, developer tools, data engineering, and infrastructure.

What Vikram Chennai’s success with Ardent says about the market

Ardent says something useful about where the market is heading.

A big part of the AI software wave is no longer about whether models can generate useful code. That question has already moved forward. The more pressing question now is how teams manage trust, safety, and verification as AI becomes part of everyday engineering work.

That is especially true in databases, where mistakes can quietly become expensive.

Vikram Chennai’s work with Ardent reflects that shift. The company is building around an idea that feels increasingly obvious once you hear it: if coding agents are going to work near critical systems, they need safe and realistic environments to prove their output. Without that layer, teams either slow down or accept more risk than they should.

Ardent is betting that database sandboxing will become a normal part of AI-native engineering workflows. That is a strong bet because it aligns with how real teams behave. They want automation. They want speed. But they also want to know their systems will hold up when the code touches production data.

Why the Ardent story is getting attention

There is a reason this story is easy to follow.

It combines a strong founder angle, a timely infrastructure problem, and a product that solves something engineers already understand. Vikram Chennai did not build Ardent around a vague promise that AI will change everything. He built it around a more grounded reality: AI can write a lot of code, but database work still needs a safer proving ground.

That idea gives Ardent substance.

It also gives the company room to grow. What starts as a better way to test migrations and schema changes can expand into a broader layer for database verification, production-safe experimentation, and AI-assisted data engineering workflows. Whether Ardent grows into that full vision or stays tightly focused, the core logic already makes sense.

And that is usually where the best infrastructure startups begin.